AI racks are getting hotter, denser, and harder to cool. Many operators still try to stretch air cooling too far, and that creates cost, risk, and performance limits.

Yes. Liquid cooling moves heat away from AI chips more effectively than air, so it supports higher rack densities, better energy efficiency, and more stable GPU performance. I see it as essential for many new AI deployments, but not for every data center on day one.

The important point is not that liquid cooling replaces air everywhere. The real question is where it solves a problem that air cooling can no longer solve well. In my experience, that is the lens operators should use before they choose direct-to-chip, immersion, or a hybrid design.

What Is Liquid Cooling in a Data Center?

Air cooling feels familiar, so many teams assume it should be enough. But AI servers push far more heat into far less space.

Put simply, liquid cooling means using a fluid loop to collect heat from IT equipment and carry it away far more efficiently than room air can.

What liquid cooling means in simple terms

I explain liquid cooling like this: instead of asking fans and room air to do nearly all the work, I bring a liquid much closer to the heat source. That liquid absorbs heat quickly, carries it to another loop or a heat exchanger, and the heat is then rejected outside the rack or outside the building.

Why AI workloads create a cooling problem air can’t easily solve

AI changes the cooling equation because GPUs and AI accelerators concentrate power in a very small area. That creates much higher heat flux at the chip and server level, and it drives far higher rack density than traditional enterprise workloads.

Liquid cooling vs traditional air cooling at a glance

I do not frame this as good versus bad. I frame it as fit versus mismatch. Air cooling is simpler and widely understood, but liquid cooling removes heat closer to the source and supports denser AI deployments.

| Factor | Air Cooling | Liquid Cooling |
|---|---|---|
| Heat removal point | Around server and room | Near chip, board, rack, or tank |
| Best density range | Lower to moderate | Moderate to very high |
| Retrofit difficulty | Lower | Higher |
| Efficiency at high density | Falls faster | Much more stable |
| Operational change | Smaller | Larger |

How Does Liquid Cooling Work?

Many teams know liquid cooling is more efficient, but they do not know where the heat actually goes. That makes design decisions harder.

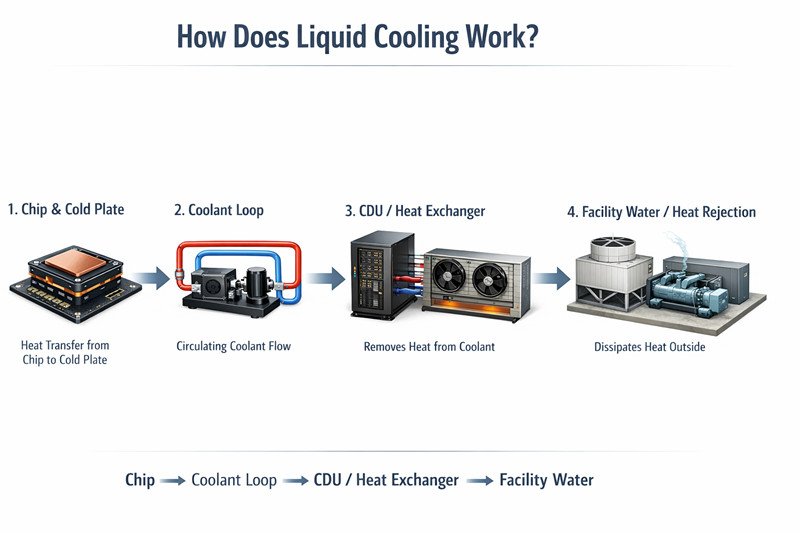

The heat path is simple: chip to cold plate or fluid interface, then to a coolant loop, then to a CDU or heat exchanger, and finally to facility water or another heat rejection system.

The heat path from GPU/CPU to coolant loop

At the server level, the hottest components are usually CPUs and GPUs. In direct-to-chip systems, a cold plate sits on those parts and draws heat into circulating liquid. This replaces the traditional air heat sink.
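
One way to reason about this heat path is as a chain of thermal resistances in series between the chip and the coolant. The sketch below is only an illustration of that arithmetic, not a design tool. The function name `junction_temp_c`, the 700 W chip power, the 30 C coolant temperature, and every resistance value are assumptions picked for the example.

```python
def junction_temp_c(coolant_inlet_c, chip_power_w, resistances_k_per_w):
    """Estimate chip temperature from a series thermal-resistance chain.

    T_chip = T_coolant + P * (R1 + R2 + ...), with each R in K/W.
    """
    total_resistance = sum(resistances_k_per_w.values())
    return coolant_inlet_c + chip_power_w * total_resistance


# Hypothetical resistance stack for one cold-plate-cooled processor (K/W).
stack = {
    "die_to_case": 0.020,
    "thermal_interface": 0.010,
    "cold_plate_to_coolant": 0.020,
}

# Assumed operating point: 30 C coolant, 700 W chip.
estimated_c = junction_temp_c(coolant_inlet_c=30.0, chip_power_w=700.0,
                              resistances_k_per_w=stack)
print(f"Estimated chip temperature: {estimated_c:.1f} C")
```

The useful part of this model is that it shows where improvements come from: a cooler coolant supply, a better thermal interface, or a lower-resistance cold plate each reduce the chip temperature in a predictable way.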

Key components: cold plates, manifolds, CDUs, heat exchangers, facility water

A typical system includes several layers:

- Cold plates attached to processors

- Manifolds and hoses distributing coolant

- CDUs controlling temperature and pressure

- Heat exchangers transferring heat to facility water

- Building-side cooling infrastructure rejecting heat outside

Each part plays a role in maintaining stable cooling for high-density compute.
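
To make the CDU's temperature-control role more concrete, here is a minimal sketch of a proportional control rule that opens a facility-water valve further as the coolant supply temperature drifts above its setpoint. Real CDUs use vendor-specific control logic, so everything here is hypothetical: the class name, the gain, the bias, and the valve limits are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass
class ProportionalValveController:
    """Hypothetical proportional controller for a CDU facility-water valve."""

    setpoint_c: float          # target coolant supply temperature
    gain_pct_per_k: float      # extra valve opening per kelvin of error
    bias_pct: float = 50.0     # valve opening when supply is exactly on setpoint
    min_open_pct: float = 10.0
    max_open_pct: float = 100.0

    def valve_opening(self, measured_supply_c: float) -> float:
        # Positive error means the supply coolant is warmer than desired,
        # so more facility water is needed through the heat exchanger.
        error_k = measured_supply_c - self.setpoint_c
        raw = self.bias_pct + self.gain_pct_per_k * error_k
        # Clamp to the physical range of the valve.
        return max(self.min_open_pct, min(self.max_open_pct, raw))


controller = ProportionalValveController(setpoint_c=32.0, gain_pct_per_k=20.0)
for reading_c in (28.0, 32.0, 34.0, 36.0):
    print(f"{reading_c:.1f} C -> valve {controller.valve_opening(reading_c):.0f}% open")
```

A production controller would normally add integral action, rate limits, and alarms; the point here is only the direction of the response.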

Common coolants: water, water-glycol, dielectric fluids

Direct liquid cooling systems often use water or water-glycol mixtures because they move heat efficiently. Immersion systems use dielectric fluids since the liquid contacts electronics and must not conduct electricity.

What Are the Main Types of Liquid Cooling?

Operators hear many terms, and that often creates confusion. Not every liquid system works the same way.

The main types are direct-to-chip cooling, immersion cooling, and hybrid solutions such as rear-door heat exchangers.

Direct-to-chip (direct liquid cooling)

Direct-to-chip cooling targets the hottest components like GPUs and CPUs. Cold plates remove heat while other parts of the server still rely on air cooling. This approach integrates well with existing server designs.

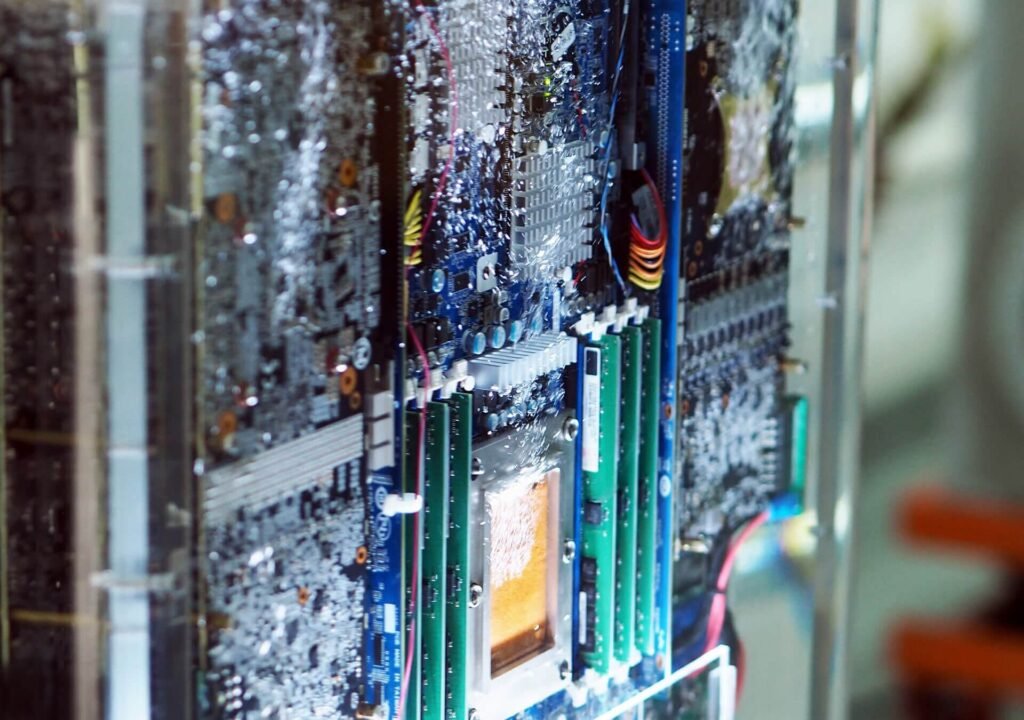

Immersion cooling

Immersion cooling places servers inside a tank filled with dielectric fluid. The liquid absorbs heat directly and transfers it to an external heat exchanger. This design can support very high rack densities.

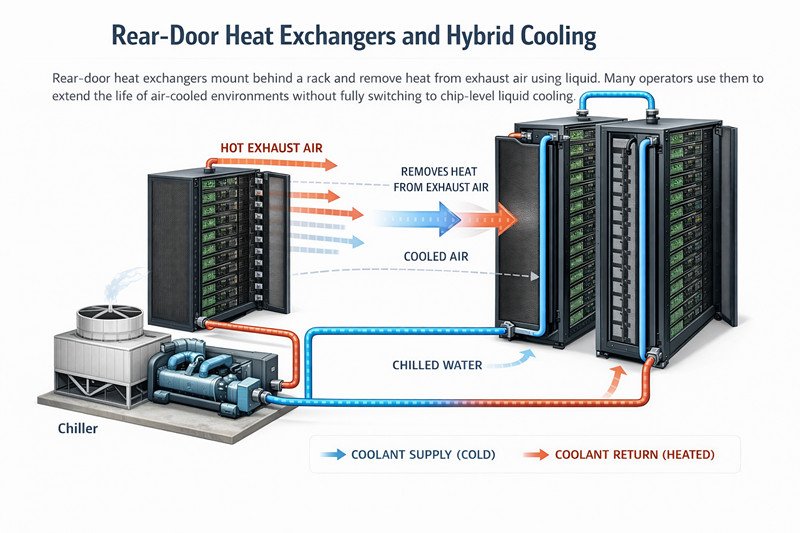

Rear-door heat exchangers and hybrid cooling

Rear-door heat exchangers mount behind a rack and remove heat from exhaust air using liquid. Many operators use them to extend the life of air-cooled environments without fully switching to chip-level liquid cooling.

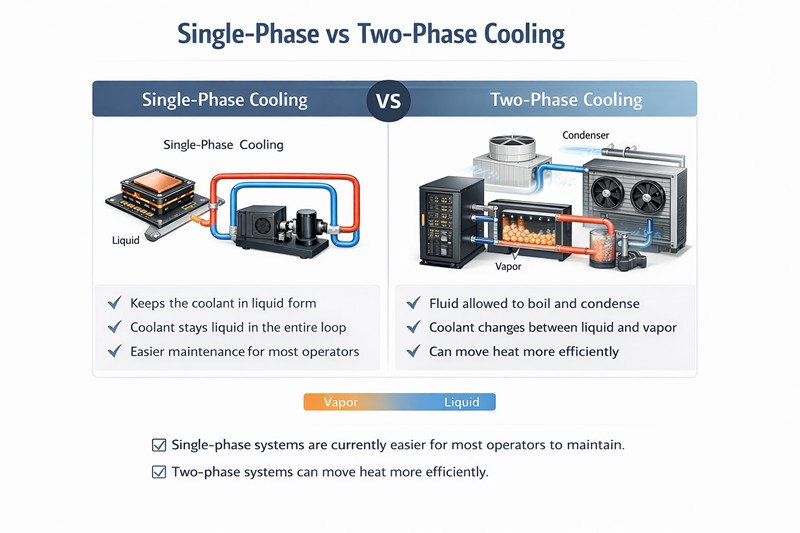

Single-phase vs two-phase cooling

Single-phase cooling keeps the coolant in liquid form. Two-phase systems allow the fluid to boil and condense to move heat more efficiently. Single-phase systems are currently easier for most operators to maintain.
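
The difference between the two can be sketched with a simple energy balance. Single-phase cooling relies on sensible heat (specific heat times temperature rise), while two-phase cooling relies mainly on latent heat of vaporization. The fluid properties below are made-up illustrative values, not data for any real coolant, and the two-phase case assumes all of the fluid vaporizes and ignores sensible heating.

```python
def mass_flow_single_phase(heat_w, cp_j_per_kg_k, delta_t_k):
    """Mass flow (kg/s) needed when heat is carried as sensible heat only."""
    return heat_w / (cp_j_per_kg_k * delta_t_k)


def mass_flow_two_phase(heat_w, latent_heat_j_per_kg):
    """Mass flow (kg/s) needed when heat is carried by full vaporization."""
    return heat_w / latent_heat_j_per_kg


heat_w = 100_000.0             # heat load to remove
cp_j_per_kg_k = 1_300.0        # assumed specific heat of a hypothetical fluid
delta_t_k = 10.0               # assumed allowable temperature rise
latent_heat_j_per_kg = 140_000.0  # assumed latent heat of the same fluid

single = mass_flow_single_phase(heat_w, cp_j_per_kg_k, delta_t_k)
two = mass_flow_two_phase(heat_w, latent_heat_j_per_kg)

print(f"Single-phase: {single:.2f} kg/s")
print(f"Two-phase:    {two:.2f} kg/s")
print(f"Single-phase needs about {single / two:.1f}x the mass flow")
```

Under these assumed numbers the two-phase loop moves the same heat with roughly a tenth of the mass flow, which is the efficiency advantage described above. It says nothing about the added complexity of managing vapor, pressure, and fluid loss.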

Why Is Liquid Cooling Becoming Essential for AI Data Centers?

The traditional cooling model is under pressure because AI hardware generates much more heat per rack.

Liquid cooling supports higher densities, improves energy efficiency, and keeps accelerators operating within stable temperature limits.

Higher rack densities and thermal limits

Modern AI racks can exceed 100 kW of power. At that level, moving enough air becomes extremely difficult and expensive. Liquid cooling removes heat directly where it is produced.
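
A quick energy balance shows why. The sketch below uses the sensible-heat relation Q = rho * V * cp * dT with approximate textbook properties for air and water near room temperature. The 10 K temperature rise is an assumption chosen for the example; real designs use different values for air and liquid.

```python
# Approximate fluid properties near room temperature.
AIR = {"density": 1.2, "cp": 1005.0}      # kg/m^3, J/(kg*K)
WATER = {"density": 998.0, "cp": 4180.0}  # kg/m^3, J/(kg*K)


def volumetric_flow_m3_per_s(heat_w, delta_t_k, fluid):
    """Volume flow needed to carry heat_w with a temperature rise of delta_t_k."""
    return heat_w / (fluid["density"] * fluid["cp"] * delta_t_k)


rack_heat_w = 100_000.0  # a 100 kW rack
delta_t_k = 10.0         # assumed temperature rise of the cooling medium

air_flow = volumetric_flow_m3_per_s(rack_heat_w, delta_t_k, AIR)
water_flow = volumetric_flow_m3_per_s(rack_heat_w, delta_t_k, WATER)

print(f"Air:   {air_flow:.2f} m^3/s")         # about 8.29 m^3/s
print(f"Water: {water_flow * 1000:.2f} L/s")  # about 2.40 L/s
print(f"Air needs roughly {air_flow / water_flow:.0f}x the volume flow")
```

Under these assumptions a single rack would need more than 8 cubic meters of air per second, while a water loop could carry the same heat with a few liters per second.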

Better energy efficiency and lower cooling overhead

Fans and mechanical chillers consume a large portion of facility power in traditional designs. Liquid cooling reduces airflow demand and lowers the total cooling energy required.
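
Cooling overhead is commonly tracked with power usage effectiveness (PUE), defined as total facility power divided by IT power. The sketch below compares two scenarios. All of the kW figures are hypothetical and chosen only to show how the ratio responds when cooling power falls; they are not measurements from any facility.

```python
def pue(it_kw, cooling_kw, other_overhead_kw):
    """Power usage effectiveness: total facility power divided by IT power."""
    if it_kw <= 0:
        raise ValueError("it_kw must be positive")
    return (it_kw + cooling_kw + other_overhead_kw) / it_kw


# Hypothetical scenarios with the same IT load and non-cooling overhead.
air_cooled = pue(it_kw=1000.0, cooling_kw=400.0, other_overhead_kw=100.0)
liquid_cooled = pue(it_kw=1000.0, cooling_kw=150.0, other_overhead_kw=100.0)

print(f"Air-cooled PUE:    {air_cooled:.2f}")
print(f"Liquid-cooled PUE: {liquid_cooled:.2f}")
```

The same function can be fed with metered values from a real site to see how much of the overhead is actually attributable to cooling.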

More stable performance for GPUs and accelerators

Thermal instability can cause GPUs to throttle performance. Liquid cooling stabilizes chip temperature, which helps maintain consistent compute performance.

Space savings and infrastructure optimization

Higher density means fewer racks for the same compute capacity. Liquid cooling allows operators to maximize power and space utilization inside existing facilities.
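
The space effect is simple arithmetic. In this sketch the 2 MW IT load and the two per-rack densities are assumptions for illustration, and `racks_needed` is a hypothetical helper name.

```python
import math


def racks_needed(total_it_kw, kw_per_rack):
    """Number of racks required to host a given IT load at a given density."""
    if kw_per_rack <= 0:
        raise ValueError("kw_per_rack must be positive")
    return math.ceil(total_it_kw / kw_per_rack)


total_it_kw = 2000.0  # assumed total IT load

low_density = racks_needed(total_it_kw, kw_per_rack=20.0)
high_density = racks_needed(total_it_kw, kw_per_rack=100.0)

print(f"At 20 kW per rack:  {low_density} racks")
print(f"At 100 kW per rack: {high_density} racks")
```

Fewer racks does not automatically mean less floor space, since CDUs and piping also need room, but it does change how white space and power distribution are planned.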

Direct Liquid Cooling vs Immersion Cooling: Which Is Better?

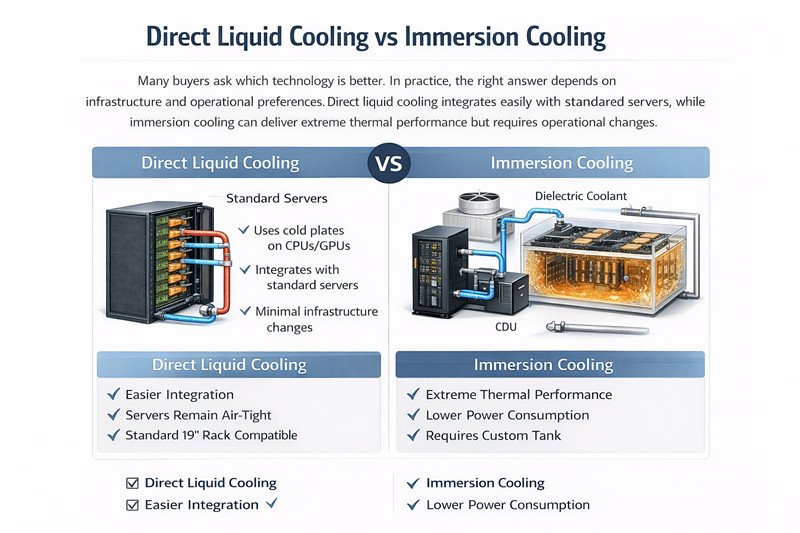

Many buyers ask which technology is better. In practice, the right answer depends on infrastructure and operational preferences.

Direct liquid cooling integrates easily with standard servers, while immersion cooling can deliver extreme thermal performance but requires operational changes.

How the two approaches differ technically

Direct-to-chip systems circulate coolant through cold plates attached to processors. Immersion systems submerge hardware in dielectric liquid.

Best-fit use cases for each

Direct liquid cooling works well for enterprise AI clusters and hyperscale deployments. Immersion cooling often appears in specialized HPC environments or extremely dense AI workloads.

Pros, cons, and tradeoffs for operators

| Topic | Direct-to-Chip | Immersion |
|---|---|---|
| Ecosystem compatibility | Strong | Limited |
| Density capability | High | Very high |
| Operational change | Moderate | Significant |
| Maintenance workflow | Familiar | Different |

When Do You Actually Need Liquid Cooling?

Not every data center requires liquid cooling immediately. Some workloads still operate comfortably with air systems.

You usually need liquid cooling when rack density and thermal load exceed what airflow can handle efficiently.

Signs air cooling may still be sufficient

Air cooling may remain viable when rack densities are moderate and airflow management is well optimized.

Thresholds where hybrid or liquid cooling becomes practical

When rack power exceeds what the room's air system was designed to remove, or when hot spots keep reappearing despite airflow fixes, hybrid or liquid cooling becomes more attractive.
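
That reasoning can be written as a small screening rule. The thresholds are deliberately left as inputs because they depend on the facility; the values used in the example calls are placeholders, not recommendations, and `suggest_cooling` is a hypothetical helper.

```python
def suggest_cooling(rack_kw, recurring_hot_spots, *, air_limit_kw, hybrid_limit_kw):
    """Return a first-pass cooling suggestion for one rack.

    air_limit_kw:    rack power the site's air system handles comfortably
    hybrid_limit_kw: rack power beyond which hybrid assistance is not enough
    """
    if air_limit_kw > hybrid_limit_kw:
        raise ValueError("air_limit_kw must not exceed hybrid_limit_kw")
    if rack_kw > hybrid_limit_kw:
        return "liquid"
    if rack_kw > air_limit_kw or recurring_hot_spots:
        return "hybrid"
    return "air"


# Placeholder site limits, for illustration only.
limits = {"air_limit_kw": 20.0, "hybrid_limit_kw": 40.0}

print(suggest_cooling(12.0, False, **limits))  # air
print(suggest_cooling(15.0, True, **limits))   # hybrid
print(suggest_cooling(25.0, False, **limits))  # hybrid
print(suggest_cooling(80.0, False, **limits))  # liquid
```

A rule like this is only a screening step. The questions in the next section still decide whether a given design is practical.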

Questions operators should ask before choosing a design

Before switching technologies, operators should consider future rack densities, facility water infrastructure, operational skills, and vendor support.

What Are the Benefits of Liquid Cooling?

Operators often evaluate liquid cooling purely on energy savings. In reality, the benefits extend much further.

Liquid cooling improves thermal efficiency, system performance, and long-term infrastructure scalability.

Thermal efficiency

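Liquid carries heat far more efficiently than air. Water, for example, absorbs roughly 3,500 times more heat per unit volume than air for the same temperature rise, which is what allows liquid systems to handle high thermal loads.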

Performance and uptime

Stable chip temperatures help maintain consistent GPU performance and reduce thermal stress on hardware.

Potential sustainability gains

Efficient heat removal can reduce total energy consumption and support waste-heat reuse strategies.

Long-term infrastructure scalability

As AI hardware grows more powerful, liquid cooling provides a scalable path for future data center expansion.

What Are the Challenges and Risks?

Liquid cooling solves some problems but introduces new ones. Operators must evaluate these risks carefully.

The most common challenges include upfront cost, operational complexity, and materials compatibility.

Upfront cost and retrofit complexity

Installing piping, CDUs, and liquid distribution systems increases capital expenditure and design complexity.

Leak risks, maintenance, and operational readiness

Operators must implement leak detection systems and train staff to handle liquid infrastructure safely.

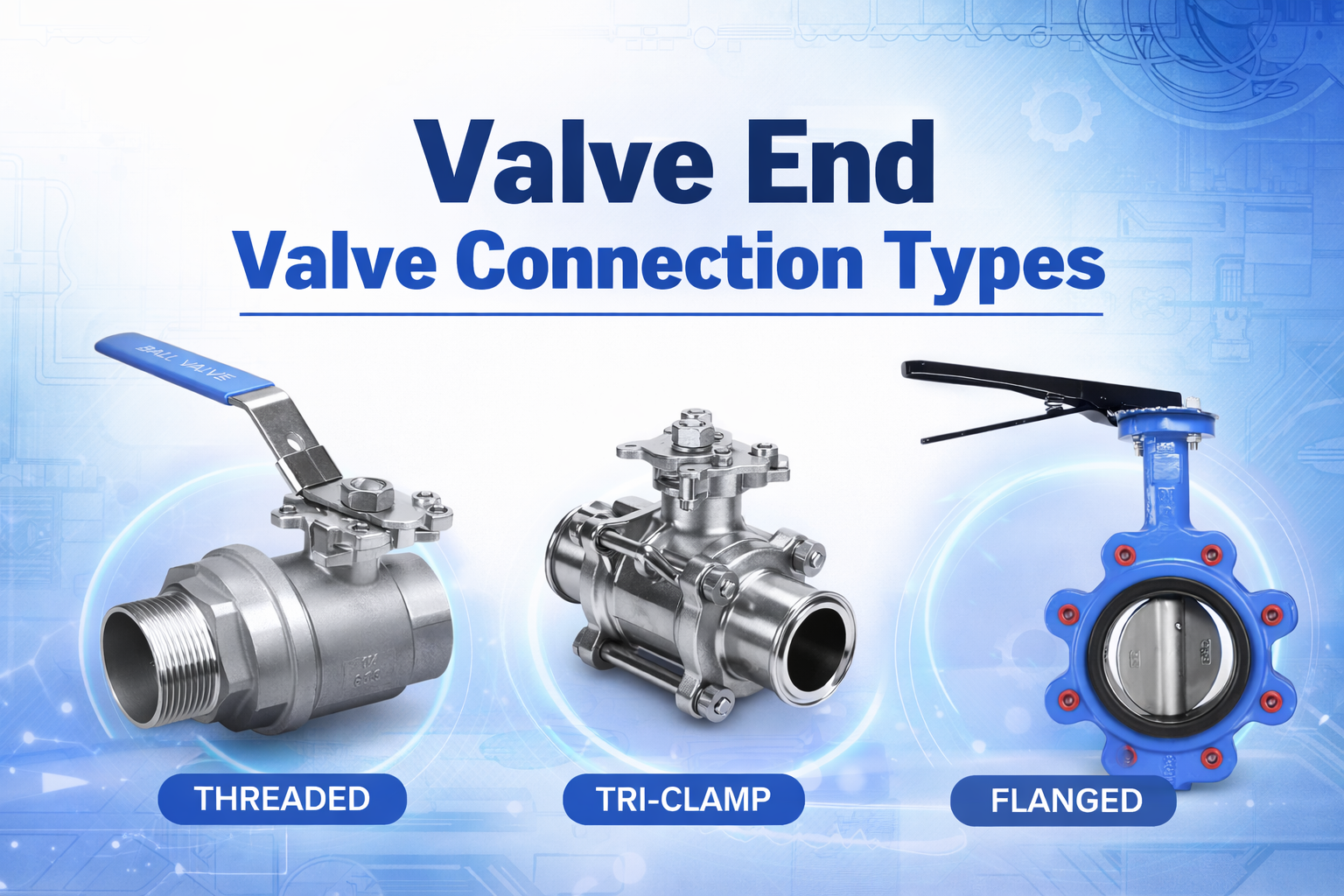

Materials compatibility and coolant management

Coolant chemistry must match the materials used in pipes, valves, seals, and fittings to avoid corrosion.

Vendor coordination, warranties, and service models

Cooling systems involve multiple vendors, which requires clear responsibility agreements and service procedures.

What About Liquid Cooling Economics?

Cooling decisions always involve financial tradeoffs. The goal is to balance capital cost with operational savings.

Liquid cooling often increases initial cost but can reduce operating expense and unlock higher compute density.

Capex vs opex

Liquid cooling requires upfront investment in infrastructure, but lower cooling energy use can reduce operating expense over the life of the facility.

What affects total cost of ownership

Key factors include energy cost, density requirements, facility upgrades, maintenance needs, and downtime risk.
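
A first-pass comparison can be done with a simple total-cost and payback calculation. Every figure below is a hypothetical placeholder in arbitrary currency units, and the model ignores discounting, maintenance differences, and downtime risk, so treat it as a starting template rather than a financial model.

```python
def total_cost(capex, annual_opex, years):
    """Undiscounted total cost of ownership over a number of years."""
    return capex + annual_opex * years


def simple_payback_years(extra_capex, annual_opex_savings):
    """Years needed for opex savings to repay extra capex, or None if never."""
    if annual_opex_savings <= 0:
        return None
    return extra_capex / annual_opex_savings


# Hypothetical inputs for two designs serving the same IT load.
air = {"capex": 1_000_000.0, "annual_opex": 400_000.0}
liquid = {"capex": 1_500_000.0, "annual_opex": 300_000.0}
years = 10

air_tco = total_cost(air["capex"], air["annual_opex"], years)
liquid_tco = total_cost(liquid["capex"], liquid["annual_opex"], years)
payback = simple_payback_years(
    liquid["capex"] - air["capex"],
    air["annual_opex"] - liquid["annual_opex"],
)

print(f"Air TCO over {years} years:    {air_tco:,.0f}")
print(f"Liquid TCO over {years} years: {liquid_tco:,.0f}")
print(f"Simple payback: {payback:.1f} years")
```

Replacing the placeholders with site-specific energy prices, facility upgrade quotes, and maintenance estimates shows quickly which of the factors above dominates the result.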

Where ROI is strongest

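Return on investment tends to be strongest in AI training clusters, HPC environments, and high-density colocation deployments, where rack density is high enough for the cooling savings and space gains to offset the added infrastructure cost.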

What Are Real-World Use Cases?

Liquid cooling is already being deployed across several types of facilities supporting high-performance computing workloads.

Hyperscale AI training clusters

Large AI training clusters often exceed the thermal limits of air cooling, making liquid systems essential.

Enterprise AI deployments

Enterprises use liquid cooling for high-performance AI workloads and large GPU clusters.

HPC and research environments

Research institutions have long used advanced cooling methods for supercomputing systems.

Colocation facilities preparing for AI tenants

Colocation providers increasingly build liquid-ready infrastructure to attract AI customers.

What Is the Future of AI Data Center Cooling?

Cooling technologies will continue evolving alongside AI hardware.

Hybrid designs combining air and liquid systems will likely dominate future deployments.

Why hybrid cooling will likely remain important

Hybrid architectures balance efficiency, cost, and operational familiarity.

Emerging innovations in chip-level and microfluidic cooling

Future designs may integrate cooling directly into chip packaging to manage extreme heat loads.

How cooling choices shape future AI infrastructure

Cooling architecture now influences data center design, rack layout, and power distribution strategies.

FAQ

Liquid cooling raises many practical questions for operators planning AI infrastructure.

Is liquid cooling better than air cooling?

For high-density AI workloads, liquid cooling usually provides better thermal management and energy efficiency.

What is the difference between direct-to-chip and immersion cooling?

Direct-to-chip cools specific components using cold plates, while immersion cooling submerges hardware in dielectric liquid.

Does liquid cooling increase water usage?

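Not necessarily. Many systems use closed coolant loops that do not significantly increase water consumption. Overall water use depends mainly on how the facility rejects heat outdoors, for example evaporative cooling towers versus dry coolers.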

Can existing data centers be retrofitted for liquid cooling?

Yes, but retrofit difficulty depends on building infrastructure and cooling architecture.

Is liquid cooling only for hyperscalers?

No. Enterprises, research facilities, and colocation operators are also adopting liquid cooling for high-density workloads.

Conclusion

Liquid cooling is becoming a practical requirement for modern AI infrastructure. I see it not as a replacement for air cooling, but as the technology that enables the next generation of high-density computing.

Beyond Fluid is a leading supplier of stainless steel valves and fittings for data center cooling systems. Contact us for more information.